Here’s a story about the time I over-engineered a simple problem and learned a hard lesson about message queues.

What Happened

It was 2022. I had an API endpoint that processed user uploads and sent confirmation emails. The endpoint was slow – sometimes timing out when thousands of users uploaded at once.

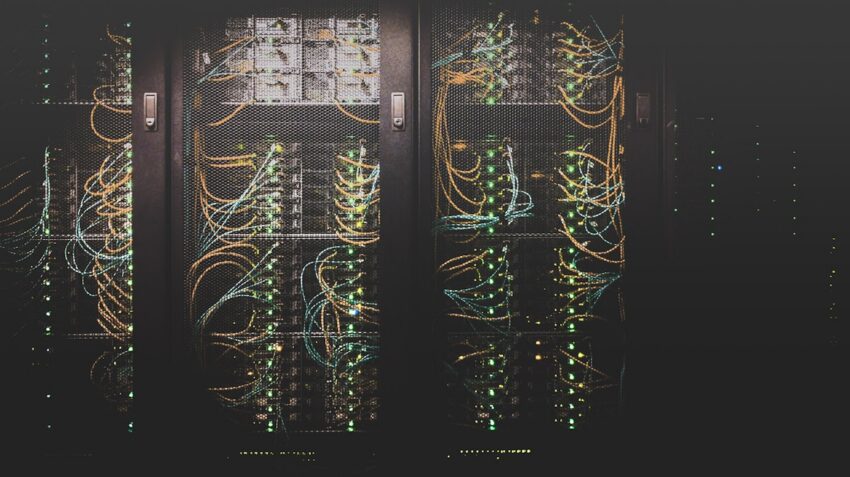

My first thought: “I need a message queue.” RabbitMQ was popular and everyone seemed to be using it. So I spent two weeks setting it up, configuring exchanges, bindings, dead-letter queues – the whole thing.

Did it fix the problem? Partially. But it introduced a bunch of new ones.

The Problems I Created

- Debugging became a nightmare. Instead of reading logs, I was tracing messages across queues, trying to figure out where they got stuck.

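- Duplicate processing. A queue can redeliver a message (for example, when a consumer dies before acknowledging it), and my consumer didn’t have idempotency handling. The same email sent 3 times = angry users.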

- Added latency. What was a 200ms synchronous call became a 2-second async flow. Users didn’t notice “fast” anymore – they noticed “different.”

- Infrastructure overhead. Now I had to maintain a RabbitMQ cluster, monitor its health, handle capacity planning.
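
The duplicate-email problem is the easiest of these to illustrate. Below is a minimal sketch of an idempotent consumer, assuming every message carries a unique ID. The `message_id` field, the class name, and the in-memory set are all hypothetical stand-ins; a real consumer would more likely record processed IDs in a durable store.

```python
class IdempotentEmailConsumer:
    """Sends each message at most once, keyed on a unique message ID."""

    def __init__(self, send_email):
        self._send_email = send_email
        # Assumption: an in-memory set is enough for this sketch.
        # It is lost on restart, so real code would use a durable store.
        self._processed_ids = set()

    def handle(self, message):
        """Return True if the email was sent, False if it was a duplicate."""
        message_id = message["message_id"]  # hypothetical field name
        if message_id in self._processed_ids:
            return False  # redelivery: skip the side effect
        self._send_email(message["to"], message["subject"])
        self._processed_ids.add(message_id)
        return True


# Simulate the broker delivering the same message three times.
sent = []
consumer = IdempotentEmailConsumer(lambda to, subject: sent.append((to, subject)))
msg = {"message_id": "upload-42", "to": "user@example.com", "subject": "Upload received"}
results = [consumer.handle(msg) for _ in range(3)]
print(results, len(sent))
```

Only the first delivery triggers an email here; the two redeliveries are recognized by ID and skipped.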

What I Should Have Done Instead

Looking back, the real issue wasn’t the architecture – it was the database query inside that endpoint. One N+1 query (one query to fetch a list, then one more query for every row in it) was causing all the slowness. I fixed it in 20 minutes once I actually profiled the code.
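
To make the N+1 pattern concrete, here is a small sketch using an in-memory SQLite database. The `users` and `uploads` tables and both function names are invented for illustration and are not the schema from the original endpoint. The first function issues one query per upload; the second gets the same rows with a single JOIN.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT);
    CREATE TABLE uploads (id INTEGER PRIMARY KEY, user_id INTEGER, filename TEXT);
    INSERT INTO users VALUES (1, 'a@example.com'), (2, 'b@example.com');
    INSERT INTO uploads VALUES (1, 1, 'a.png'), (2, 2, 'b.png'), (3, 1, 'c.png');
""")


def emails_n_plus_one(conn):
    """One query for the uploads, then one extra query per upload."""
    uploads = conn.execute("SELECT id, user_id FROM uploads ORDER BY id").fetchall()
    queries = 1
    result = []
    for upload_id, user_id in uploads:
        row = conn.execute("SELECT email FROM users WHERE id = ?", (user_id,)).fetchone()
        queries += 1
        result.append((upload_id, row[0]))
    return result, queries


def emails_joined(conn):
    """The same data fetched in a single query."""
    rows = conn.execute(
        "SELECT uploads.id, users.email FROM uploads "
        "JOIN users ON users.id = uploads.user_id ORDER BY uploads.id"
    ).fetchall()
    return rows, 1


slow_rows, slow_queries = emails_n_plus_one(conn)
fast_rows, fast_queries = emails_joined(conn)
print(slow_queries, fast_queries)
```

With three uploads the difference is four queries versus one. With thousands of uploads, the per-row round trips are what dominate the request time.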

And if I still wanted the email off the request path, a simple async task with a worker would have worked. I didn’t need the full message queue machinery.
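
As a rough sketch of what “a simple async task with a worker” can mean, here is one background thread draining an in-process queue, using only the Python standard library. The function names are hypothetical, and the email call is replaced by a list append.

```python
import queue
import threading

tasks = queue.Queue()
sent = []


def send_confirmation_email(address):
    # Stand-in for a real email call (assumption for this sketch).
    sent.append(address)


def worker():
    """Process queued addresses until a None sentinel arrives."""
    while True:
        address = tasks.get()
        try:
            if address is None:
                return
            send_confirmation_email(address)
        finally:
            tasks.task_done()


thread = threading.Thread(target=worker, daemon=True)
thread.start()


def handle_upload(address):
    """Request handler: enqueue the email and respond immediately."""
    tasks.put(address)
    return "accepted"


responses = [handle_upload(a) for a in ("a@example.com", "b@example.com")]
tasks.join()      # wait until both queued emails are handled
tasks.put(None)   # tell the worker to stop
thread.join()
print(responses, sent)
```

The trade-off is that anything still in this queue is lost if the process dies. That may be acceptable for a confirmation email, and it is exactly the durability question to ask before reaching for a broker.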

The Takeaway

Message queues are great for specific problems: very high throughput, durable delivery, and decoupling services. But they’re not a performance magic wand.

Before adding queue complexity, ask yourself:

- Have I actually identified the bottleneck?

- Do I need message durability?

- Can I solve this with simpler async tools (background workers, cron jobs)?

- Am I adding operational complexity I can handle?
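
The first question on that list is usually the cheapest to answer. Here is a minimal sketch using Python’s built-in `cProfile`; `handle_upload`, `slow_lookup`, and `build_response` are hypothetical stand-ins for a real handler, with a `sleep` playing the part of the slow query.

```python
import cProfile
import io
import pstats
import time


def slow_lookup():
    time.sleep(0.05)  # pretend this is the slow database query


def build_response():
    return {"status": "ok"}


def handle_upload():
    slow_lookup()
    return build_response()


profiler = cProfile.Profile()
profiler.enable()
response = handle_upload()
profiler.disable()

# Sort by cumulative time so the most expensive call paths come first.
report = io.StringIO()
pstats.Stats(profiler, stream=report).sort_stats("cumulative").print_stats(10)
print(report.getvalue())
```

In the printed report, `slow_lookup` should account for nearly all of the cumulative time, which points at the query rather than at the architecture.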

Sometimes the simplest solution is the right one. I learned to profile first, architect later.